I’ve had a few questions about 802.1q trunk ports over NSX-T overlay segments. Normally the questions are about using a trunk port to connect a firewall VM and get around the limit of 10 network interfaces on VMware VMs. Unfortunately, that type of configuration has one major issue: the VMs being secured must do the VLAN tagging on their own interfaces. A simple change in the guest OS therefore defeats the security measures and allows the VM to connect to a different network. The VM interfaces may also be able to sniff traffic on the other networks with this configuration.

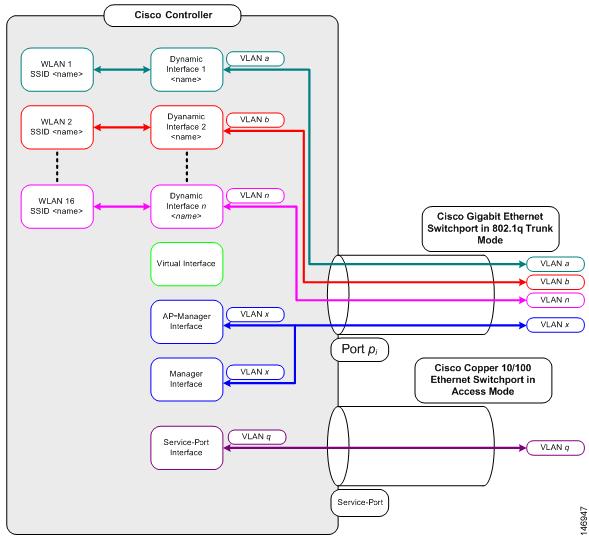
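To make the risk concrete, here is a minimal Python sketch of the 4-byte 802.1q tag a guest places in its own frames. The function names are mine, for illustration only. The point is that the VLAN ID is just a 12-bit field chosen by the sender, so on a trunk nothing stops a guest from picking a different one.

```python
import struct

TPID_8021Q = 0x8100  # EtherType value that marks an 802.1q tag


def dot1q_tag(vlan_id: int, priority: int = 0, dei: int = 0) -> bytes:
    """Return the 4-byte 802.1q tag that sits after the source MAC."""
    if not 1 <= vlan_id <= 4094:
        raise ValueError("VLAN ID must be in 1..4094")
    if not 0 <= priority <= 7:
        raise ValueError("priority must be in 0..7")
    if dei not in (0, 1):
        raise ValueError("dei must be 0 or 1")
    # TCI layout: 3 bits priority, 1 bit DEI, 12 bits VLAN ID
    tci = (priority << 13) | (dei << 12) | vlan_id
    return struct.pack("!HH", TPID_8021Q, tci)


def vlan_of(tag: bytes) -> int:
    """Extract the VLAN ID from a 4-byte 802.1q tag."""
    tpid, tci = struct.unpack("!HH", tag)
    if tpid != TPID_8021Q:
        raise ValueError("not an 802.1q tag")
    return tci & 0x0FFF
```

Changing the argument from `dot1q_tag(2)` to `dot1q_tag(3)` is all it takes for a guest on a trunk to move itself to another network, which is why this design is a poor fit for securing untrusted VMs.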

Recently I had a different ask for this type of configuration: how can you deploy a Cisco WLC with multiple SSIDs to an AVS cloud? The WLC accepts encapsulated traffic from access points, decapsulates it, and places the traffic for each SSID on a different VLAN. There is a great picture on the Cisco website that describes how this works:

Since there is no guest besides the WLC, and the WLC is outside of the endpoint’s (wireless client’s) control, there is no way for the endpoint to affect the device. This type of configuration works fine in an on-premises datacenter because the VM port group can be configured as a trunk port. The ESXi host puts the packets on the physical switch with the 802.1q tags in the header, and the switch takes it from there.

Cisco offers a cloud solution for this product: https://www.cisco.com/c/en/us/products/collateral/wireless/catalyst-9800-cl-wireless-controller-cloud/nb-06-cat9800-cl-cloud-wirel-data-sheet-ctp-en.html. The drawback with running this in the public cloud is that all traffic must be switched locally at the access point. This requires configuration on every switch, router, or firewall where access points may be connected. With support for up to 6000 access points, you can see where this might become a problem. The drawback to the traditional appliance deployed in the cloud is that all traffic is tunneled to the cloud, which adds latency and complexity. Personally, I wouldn’t want to run this configuration long term, but due to the nature of the ask I thought providing a solution was the best thing for all involved.

NSX-T allows VLANs to be added to an overlay-backed segment. To do this, just add a list of VLAN IDs to the VLAN field:

In this example VLANs 2, 3, and 4 have been added. Do not connect this segment to a gateway or assign a Subnet. This configuration essentially makes the segment a trunk virtual wire. Any VM that is connected to this segment can transmit and receive tagged packets on the configured VLANs. These frames are encapsulated in Geneve packets across the physical network. One other change required is a different MAC Learning Policy. If you are using a new deployment of NSX-T 3.2, no change is required. For previous versions and upgrades, a new policy that enables Unknown Unicast Flooding must be created and assigned to the trunk segment.
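The same settings can also be expressed as request bodies for the NSX-T Policy API. The sketch below only builds the dictionaries and does not talk to NSX. The field names (`vlan_ids`, `transport_zone_path`, `mac_learning_enabled`, `unknown_unicast_flooding_enabled`) reflect my understanding of the Segment and MAC discovery profile schemas and should be checked against the API reference for your version. The function names and any display names or paths you pass in are hypothetical.

```python
def trunk_segment_payload(name, transport_zone_path, vlan_ids):
    """Build a body for an overlay segment used as a trunk virtual wire.

    Assumption: the Policy API Segment schema takes VLAN IDs as a list
    of strings in "vlan_ids". Verify against your NSX-T version.
    """
    ids = sorted(set(vlan_ids))
    for vlan_id in ids:
        if not 1 <= vlan_id <= 4094:
            raise ValueError(f"invalid VLAN ID: {vlan_id}")
    # Deliberately no gateway connection and no subnet on this segment.
    return {
        "display_name": name,
        "transport_zone_path": transport_zone_path,
        "vlan_ids": [str(vlan_id) for vlan_id in ids],
    }


def flooding_mac_profile_payload(name):
    """Build a body for a MAC discovery profile with flooding enabled.

    Assumption: these are the relevant MAC discovery profile fields.
    """
    return {
        "display_name": name,
        "mac_learning_enabled": True,
        "unknown_unicast_flooding_enabled": True,
    }
```

Keeping the payload builders separate from any HTTP call makes it easy to review exactly what would be sent before applying it to a live manager.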

Now VMs can be attached to this segment, tag their packets with the appropriate VLANs, and communicate with each other. To get Layer 3 communication, a VM must be configured as a router connected to this segment and to another segment. The router VM is configured with VLAN interfaces on the NIC connected to the trunk segment. The second interface on the router VM is connected to a “normal” NSX-T segment that is connected to a T1 and has a Subnet assigned. The default gateway of the router VM is the NSX-T T1 IP. The T1 gets a static route for each of the VLAN subnets configured on the router VM, with the router VM’s normal interface as the next hop. With all of this done, the VLANs are routable to the rest of the network. This is a crude diagram of the configuration:
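In code form, the routing side of this layout comes down to one static route per VLAN subnet on the T1, all pointing at the router VM’s interface on the normal segment. Here is a minimal sketch of that bookkeeping. The function name, the subnets, and the next-hop address in the usage note are made up for illustration and are not taken from the actual deployment.

```python
import ipaddress


def t1_static_routes(vlan_subnets, router_next_hop):
    """Return one static-route entry per VLAN subnet.

    vlan_subnets: dict of VLAN ID -> CIDR of the router VM's VLAN interface
    router_next_hop: router VM address on the "normal" NSX-T segment
    """
    next_hop = ipaddress.ip_address(router_next_hop)
    routes = []
    for vlan_id, cidr in sorted(vlan_subnets.items()):
        network = ipaddress.ip_network(cidr)  # raises if host bits are set
        if next_hop in network:
            # The next hop must be reachable via the normal segment,
            # not through one of the trunked VLANs.
            raise ValueError(f"next hop {next_hop} is inside VLAN {vlan_id}")
        routes.append(
            {"vlan": vlan_id, "network": str(network), "next_hop": str(next_hop)}
        )
    return routes
```

For example, `t1_static_routes({2: "10.10.2.0/24", 3: "10.10.3.0/24", 4: "10.10.4.0/24"}, "10.10.1.10")` yields three entries that all share the next hop `10.10.1.10`.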

This configuration is probably not supported by either Cisco or VMware, but it does function. One of the unknowns from my perspective is the MAC Limit on the NSX-T port. If all of the wireless client MACs show up on this port, they will more than likely exceed the 4096 limit. If the WLC rewrites the source MAC on the packets to its own, then there shouldn’t be an issue with the MAC Limit.

