While talking with my new teammates at Microsoft on the AVS (Azure VMware Solution) team about things we could try, I came up with a neat experiment: can I vMotion a VM from an AVS cloud to my car? Some people ask why. I ask why not? The idea centered on using HCX to push a VM down through a cell connection to a small installation I could power and run while driving through an area with 5G coverage. When I came up with this idea earlier in the week, I had just pre-ordered a new 5G-capable iPhone. For the rest of the week I set out to find some hardware that would work in the car.

I called around to some people I knew until someone said they had a NUC that would probably work. So I met him at Top Golf and picked it up. Unfortunately I didn’t have time to hit some balls and had to run as soon as I got the NUC. When I return it maybe there will be time to hit some balls.

My friend had a NUC6i7KYK. This has an Intel i7-6770HQ at 2.6 GHz, a 4-core hyper-threaded CPU. The NUC also has 64 GB of memory and a couple of SSDs. This seemed like enough horsepower to run what I needed, so I built out a small environment. Since AVS runs vSphere 6.7, I installed ESXi 6.7 U3 on the NUC. I installed vCenter, a router, and a jump box. I set up the networking on the host so that all of the components sit on an internal port group that has no access to any physical adapters. The only thing that can talk to the outside is a pfSense firewall/router. This machine has an uplink associated with its WAN interface. I forwarded RDP to the jump box for access to the environment and then moved the ESXi management to the internal vSwitch as well. To do this I made a new vmkernel port and updated DNS from the original IP to the inside IP, then flushed DNS on vCenter and reconnected the host. The logical layout looked like this:

Once all this was configured, I needed access to my AVS cloud. In VMC on AWS, HCX gets a public IP address, so no additional configuration is required. For AVS I had to work out connectivity. The easiest way was to connect my pfSense router via an IPsec tunnel. This was not very hard to configure, but it only works with a static WAN IP, which turned out to be problematic. After some testing it became apparent that I would need a different VPN that could handle a dynamic IP more easily. OpenVPN comes installed on pfSense, so I built an OpenVPN server on a VM in Azure. That allowed me to connect with a dynamic IP very easily. It did cause some other issues. With an IPsec connection to a vWAN, the remote network becomes routable to and from AVS. The method I chose required NAT. A little iptables work on the Azure VM and I was NATing into my Azure network.
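
For anyone wondering what that iptables work might look like, here is a minimal sketch in Python that only builds and prints the commands rather than running them. The tunnel subnet `10.8.0.0/24`, the interface name `eth0`, and the helper `nat_commands` are assumptions for illustration, not the values or tooling from my setup; the general technique shown is source NAT (masquerade) for traffic leaving the VPN server toward the Azure network.

```python
import ipaddress


def nat_commands(tunnel_subnet, out_iface):
    """Build (but do not run) commands that source-NAT VPN client traffic.

    tunnel_subnet and out_iface are hypothetical values supplied by the caller.
    """
    # ip_network raises ValueError if the subnet string is malformed.
    net = ipaddress.ip_network(tunnel_subnet)
    return [
        # Let the kernel forward packets between the tunnel and the NIC.
        ["sysctl", "-w", "net.ipv4.ip_forward=1"],
        # Rewrite the source of tunnel traffic to the VM's own address.
        ["iptables", "-t", "nat", "-A", "POSTROUTING",
         "-s", str(net), "-o", out_iface, "-j", "MASQUERADE"],
    ]


if __name__ == "__main__":
    # Assumed example values: an OpenVPN-style tunnel subnet and a NIC name.
    for cmd in nat_commands("10.8.0.0/24", "eth0"):
        print(" ".join(cmd))
```

Printing the commands first makes it easy to review them before running anything as root on the VM.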

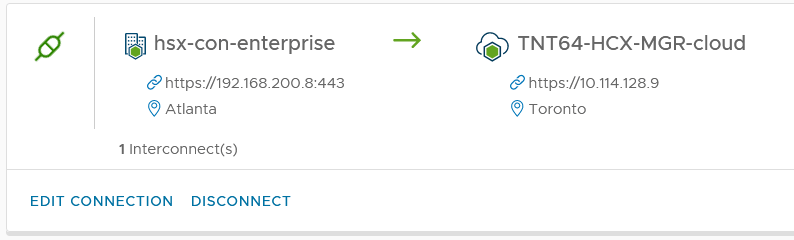

Once you have connectivity between the appliances, configuring HCX is pretty straightforward. The appliances allow NAT from the source to the cloud but not the other way around. First download the HCX connector from the cloud HCX instance. Deploy the OVA, then configure a site pairing.

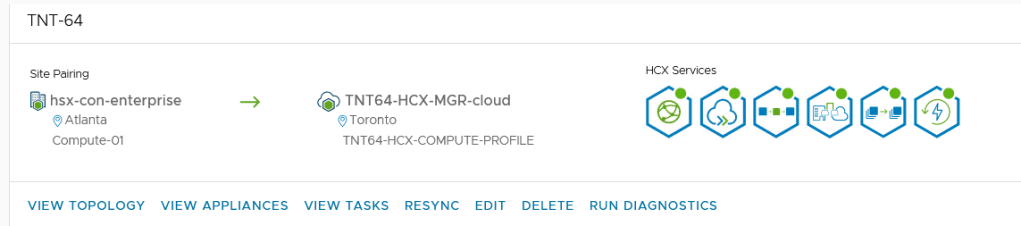

Next configure a service mesh. This asks a bunch of questions but really it’s just selecting compute and networks.

HCX also makes this super neat topology view:

Really not sure why that’s necessary, but it looks neat. Once that was up, the last thing was to configure a network extension. This required adding a VLAN tag to a fake network, because HCX requires that all extended networks have a VLAN tag. HCX also requires that the switch you are extending from have a network adapter connected. For my test I didn’t need one since it was all internal, but I connected one and set it as unused on the internal network. I ran a test migration to the cloud and back over my local internet connection and it worked fine.

Once this was all up, it was time to get the cell part working. pfSense has an iPhone interface driver, and it seems to have worked in the past, but for some reason I couldn’t get it to pull an IP on the interface. Instead I passed a USB Wi-Fi dongle through to the pfSense VM and configured it in STA mode as a client to my personal hotspot. I do have a Netgear Nighthawk 5G router with an Ethernet port, but it’s not activated and I didn’t want to go talk to AT&T.

After a few tests I found that I was unable to complete a vMotion. I kept getting odd timeouts on either the source or destination side. I believe this came down to the MTU set on the HCX uplink networks. I don’t know what the MTU on AT&T’s 5G or LTE network is, and I’m not sure how it handles fragmentation. There was also VPN overhead on the connection. With these two things in mind I set the MTU in the HCX uplink profile to 1300 on both sides. An MTU lower than 1350 requires HCX 4.2 or higher.
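
To show the reasoning behind picking a conservative number, here is a rough sketch of the headroom math. Every figure in it other than 1300 is an assumption: I never measured the cellular path MTU, and the OpenVPN overhead depends on cipher and settings, so the 60 bytes below is only a placeholder guess. The helper name `tunnel_payload_mtu` is made up for this example.

```python
def tunnel_payload_mtu(path_mtu, overheads):
    """Largest inner packet that fits in one outer packet without fragmenting."""
    return path_mtu - sum(overheads.values())


# Assumed per-packet overhead of a UDP-based VPN tunnel, in bytes.
ASSUMED_OVERHEAD = {
    "outer IPv4 header": 20,
    "UDP header": 8,
    "VPN framing and crypto (placeholder guess)": 60,
}

HCX_UPLINK_MTU = 1300  # the value set in the HCX uplink profile

if __name__ == "__main__":
    # Candidate path MTUs for the cellular link; all three are guesses.
    for path_mtu in (1500, 1430, 1400):
        room = tunnel_payload_mtu(path_mtu, ASSUMED_OVERHEAD)
        verdict = "fits" if HCX_UPLINK_MTU <= room else "would fragment"
        print(f"path MTU {path_mtu}: {room} bytes inside the tunnel, 1300 {verdict}")
```

Under these assumptions 1300 leaves room to spare even on the smallest guessed path MTU, which is the point of going low when the real numbers are unknown.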

While I was testing I also found that my iPhone hotspot could only get to about 25 Mbps down. A native speed test on my phone ran at about 100 Mbps. The VMware documentation says HCX requires 100 Mbps for a vMotion. Well, who reads documentation anyways?
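
To put that gap in perspective, here is a back-of-the-envelope sketch of how long it takes just to push a given amount of data at each rate. The 1 GiB payload is an arbitrary example size and `transfer_seconds` is a made-up helper. The result is a best-case lower bound that ignores VPN and HCX overhead, latency, and any memory pages that have to be re-sent.

```python
def transfer_seconds(size_bytes, link_mbps):
    """Ideal time to move size_bytes over a link of link_mbps megabits per second."""
    return size_bytes * 8 / (link_mbps * 1_000_000)


EXAMPLE_PAYLOAD = 1024 ** 3  # 1 GiB, an arbitrary example size

if __name__ == "__main__":
    # 25 Mbps is what the hotspot delivered; 100 Mbps is the documented minimum.
    for mbps in (25, 100):
        secs = transfer_seconds(EXAMPLE_PAYLOAD, mbps)
        print(f"{mbps} Mbps: about {secs / 60:.1f} minutes per GiB (best case)")
```

A quarter of the bandwidth means four times the wait before any real-world overhead is counted.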

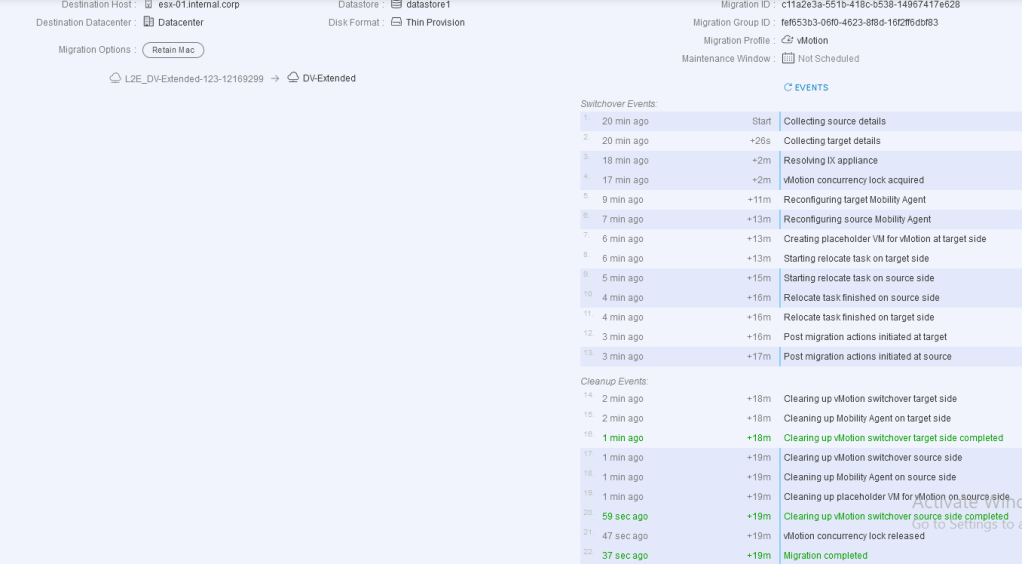

So does it work? Yes, but very slowly. It took about 20 minutes to vMotion a tiny VM. I tried a VM with no hard drives to make it faster, but HCX will fail if there is no disk attached to the VM. The VM I moved has 512 MB of memory and an empty 1 GB disk. I also reserved all the memory on the VM so the swap file would not be vMotioned. Another interesting requirement was EVC. Since the target CPUs were older than the source CPUs, they needed EVC. In AVS you can’t set a cluster-wide EVC level, as that is managed by Microsoft. Luckily there is per-VM EVC. There was no precheck in HCX for this: the migration proceeded until it failed with an error about CPU features. Once I set per-VM EVC to the right level and cold booted the VM, I tried again:

I believe with a better hotspot with native Ethernet like https://www.att.com/buy/connected-devices-and-more/netgear-nighthawk-lte-mobile-hotspot-router-512gb-steel-gray.html this would work much better. I have used this device for SD-WAN demos and was able to reach much higher speeds.

So now you know. You can in fact vMotion to a car.